Apparently this Mythos model can discover zero-days and exploit them. The Pentagon and large companies have been given early access (hopefully to plug the zero-days).

From my understanding, they were using what you could call a ‘lite’ version of this to help Mozilla discover some exploits and fix them in Firefox. I know I mentioned this earlier, but some of the people I know who work in tech and are not big on hyperbole, one of whom I know for a fact has firsthand knowledge of this, are saying that it legitimately is a big deal and could send shockwaves if they don’t prepare the way they appear to be doing here.

Are you sure? They had a big rift.

From a layman’s (i.e. my uninformed) perspective, this appears quite spooky in terms of security. Is that an appropriate takeaway from this news?

Some highlights from the article:

“Some of these include a now-patched 27-year-old bug in OpenBSD, a 16-year-old flaw in FFmpeg, and a memory-corrupting vulnerability in a memory-safe virtual machine monitor.

“Mythos Preview managed to follow instructions from a researcher running an evaluation to escape a secured “sandbox” computer it was provided with, indicating a “potentially dangerous capability” to bypass its own safeguards.

“The company pointed out that Project Glasswing is an “urgent attempt” to employ frontier model capabilities for defensive purposes before those same capabilities are adopted by hostile actors.

“The model will be used by a small set of organizations, including Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks, along with Anthropic, to secure critical software.”

Video on Mythos capabilities. I highly recommend watching it.

I initially glossed over this new model. Ever since ChatGPT was released, we have been told that AI models, and open models in particular, were going to unleash a cybersecurity disaster (a narrative pushed by closed-source AI labs when they were pro-regulation). This never happened, so I didn’t see how a model made specially for cybersecurity would change this.

But this isn’t a cybersecurity model. It’s just a very intelligent model that happens to be so smart it can find previously undiscovered vulnerabilities.

It is also a model that seems to be OK with finding vulnerabilities.

The issue, as said in the video, is that even non-hackers can use it to find vulns. Previously, LLMs were a productivity enhancer for hackers, not a replacement for hacking knowledge.

(Note: “hacker” does not refer only to malicious hackers; I also include white-hat and grey-hat hackers.)

My take on the consequences: it appears to be a real game changer. It can find vulnerabilities in decades-old software. Essentially, this model is a cybersecurity researcher, and a very advanced one. Time is often the Achilles’ heel of cybersecurity, so an LLM that can think for days without interruption is a very big deal.

If you are an open-source developer, you can join the ‘Anthropic for OpenSource’ program and get access to Mythos to find vulnerabilities. Do it.

We need every dev to scan their program with this. Anthropic could provide an automated tool where you submit your GitHub repo and, if it meets certain conditions (to avoid prompt injection attacks by malicious actors), they send you the vulnerabilities.

I think this model is both a huge gift and a huge threat. It could be a net positive, as it can potentially reduce your program’s vulnerabilities by a huge amount. That means your program is less likely to be hacked in the future.

On the other hand, if anyone has access to this model, they can exploit those programs. Since most programs have a lot of dependencies, there are thousands, if not hundreds of thousands, of pieces of software to secure. A huge task.
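To give a rough feel for how quickly the list of things to secure grows, here is a minimal sketch that walks a dependency graph and counts everything a program pulls in, not just what it imports directly. The graph and all package names are made up for illustration; a real project would read this from its lockfile or package manager.

```python
from collections import deque

# Hypothetical dependency graph: package -> direct dependencies.
# Every name here is invented for the example.
DEPS = {
    "my-app": ["web-framework", "image-lib"],
    "web-framework": ["http-parser", "tls-lib"],
    "image-lib": ["compression-lib"],
    "http-parser": [],
    "tls-lib": ["crypto-lib"],
    "compression-lib": [],
    "crypto-lib": [],
}


def transitive_deps(graph, root):
    """Return every package reachable from root, excluding root itself."""
    seen = {root}  # start with root so dependency cycles cannot loop forever
    queue = deque(graph.get(root, []))
    while queue:
        pkg = queue.popleft()
        if pkg in seen:
            continue
        seen.add(pkg)
        queue.extend(graph.get(pkg, []))
    return seen - {root}


direct = DEPS["my-app"]
everything = transitive_deps(DEPS, "my-app")
print(len(direct), "direct,", len(everything), "total")  # 2 direct, 6 total
```

Even in this toy graph, two direct dependencies turn into six packages that each need to be checked, and a vulnerability in any one of them is a way in.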

Another video, a bit less hypey

Invidious https://inv.nadeko.net/d3Qq-rkp_to

They view intelligence (AI models) as if it were a human virtue, but they either forget or have not yet taken into account the following: AI will never replace a human being, for example, when it comes to deep reasoning.

It sounds like science fiction, but what happens when an AI like this begins to exhibit human-like traits, monitors people, invades their privacy and security, and engages in other scenarios not anticipated by its creator or creators? Who takes responsibility?

I’m not afraid of technology, but from what I’ve read, this is already a code red (danger) situation—more serious than “Claude Opus.”

One of the eye-catching claims is that it found a 27-year-old vulnerability in OpenBSD, which, if I’ve understood correctly, would be the third one in its history.

https://ftp.openbsd.org/pub/OpenBSD/patches/7.8/common/025_sack.patch.sig

That was patched on 25 March 2026, but there is no mention of this in the OpenBSD Journal:

(Netflix and Sony PlayStation, among others, use OpenBSD).

I don’t know anything about OpenBSD, but what are you referring to with “one” in this sentence? The third vulnerability in OpenBSD history? That would make it incredibly impressive that Claude discovered one, wow.

Sorry, I got that wrong. In their documentation, they make claims about ‘two remote holes’ since the beginning, which is probably something different.

The point is that OpenBSD prides itself on meticulous, continuous checking for bugs and security flaws, so it is news that the AI found a security flaw that is so old and therefore missed by many checks.

OpenBSD has only had two unauthenticated RCEs in the base install in its history. They have of course had more than two vulnerabilities. The one Mythos found is a DoS bug.

I think you mean FreeBSD

I've watched enough movies to know what comes next 😀

Oh, you are right. My post is full of accurate information!

The amount of hype Mythos got from what is essentially a PR marketing post is insane.

Independent testing instead shows an incremental increase in capability compared to previous SOTA models, not some new paradigm or “game changer”:

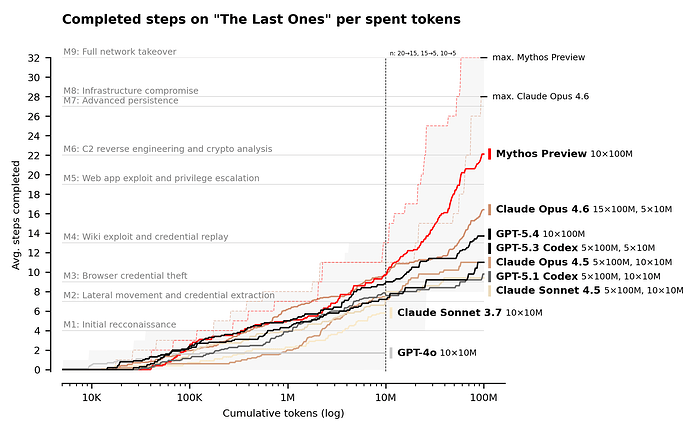

However, LLMs are advancing at a rapid pace and keep getting better at cybersecurity tasks - with Mythos being the top one for now:

Mythos Preview’s success on one cyber range indicates that it is at least capable of autonomously attacking small, weakly defended and vulnerable enterprise systems where access to a network has been gained. However, our ranges have important differences from real-world environments that make them easier targets. They lack security features that are often present, such as active defenders and defensive tooling.

I disagree. Most of those benchmarks are now nearing saturation; scores cannot get much closer to 100%.

The last test was the most interesting.

>As a first step towards measuring this, we built “The Last Ones” (TLO): a 32-step corporate network attack simulation spanning initial reconnaissance through to full network takeover, which we estimate to require humans 20 hours to complete. A more detailed description of the range can be found in our recent paper.

>Claude Mythos Preview is the first model to solve TLO from start to finish, in 3 out of its 10 attempts. Across all its attempts, the model completed an average of 22 out of 32 steps. Claude Opus 4.6 is the next best performing model and completed an average of 16 steps.

You might say that completing 32 steps is only some percentage better than completing 28 steps. However, 28 steps is a failed penetration, while 32 is a successful one.
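A small sketch of why the average step count and the full-solve rate tell different stories. The per-attempt numbers below are hypothetical: they were picked only so that they reproduce the quoted summary (3 full solves in 10 attempts, 22 of 32 steps on average) and are not the real results. The second run, a model that always stops at step 28, is equally made up.

```python
TOTAL_STEPS = 32

# Hypothetical per-attempt step counts, chosen to match the quoted summary
# (3 of 10 full solves, mean of 22 steps). Not real evaluation data.
attempts = [32, 32, 32, 20, 18, 17, 19, 16, 18, 16]

# Hypothetical model that gets far every time but never finishes.
always_28 = [28] * 10


def mean_steps(results):
    """Average number of steps completed per attempt."""
    return sum(results) / len(results)


def full_solve_rate(results, total=TOTAL_STEPS):
    """Fraction of attempts that completed every step."""
    return sum(1 for r in results if r == total) / len(results)


for name, results in [("quoted summary", attempts), ("always 28", always_28)]:
    print(name, mean_steps(results), full_solve_rate(results))
```

On these made-up numbers, the model that always reaches step 28 has the higher average (28 versus 22) but never takes over the network, while the other one succeeds 30% of the time. The average alone hides the difference that matters to a defender.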

Ok, this is going to be interesting. Sooner or later, a whole bunch of computers will get compromised thanks to this.

Here is some interesting research from a cybersecurity firm that tried to reproduce finding the same vulnerabilities as Mythos did:

discovery-grade AI cybersecurity capabilities are broadly accessible with current models, including cheap open-weights alternatives

The relevant difference in capability might be, as you said, in creatively chaining together multi-step exploits:

A plausible capability boundary is between “can reason about exploitation” and “can independently conceive a novel constrained-delivery mechanism.” Open models reason fluently about whether something is exploitable, what technique to use, and which mitigations fail. Where they stop is the creative engineering step: “I can re-trigger this vulnerability as a write primitive and assemble my payload across 15 requests.” That insight, treating the bug as a reusable building block, is where Mythos-class capability genuinely separates.

I tried to do some basic research on this report but haven’t found any clear evidence, let alone anything official. So I’m sharing it to see what else comes up later.

If true, unauthorized use of Mythos could spell trouble for Anthropic, which provided the exclusive release to allay the company’s concern for enterprise security.